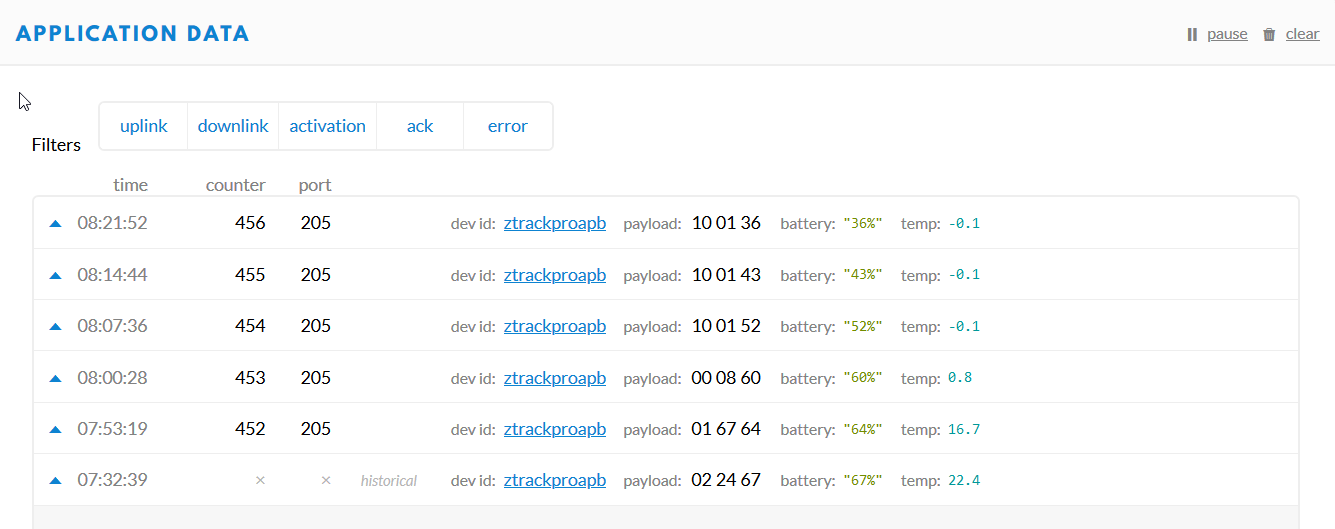

I was wrong: the device is also using packed binary-coded decimal (BCD) encoding, but only for 3 out of the 4 nibbles. So, looking at the full code, it only uses 12 out of 16 bits for the value, and then (2 out of) the remaining 4 bits for the sign:

    temp = (bytes[8] & 0x0F) * 100;
    temp += ((bytes[9] & 0xF0) >> 4 ) * 10;
    temp += bytes[9] & 0x0F;
    if( bytes[8] & 80)
        temp /= -10;
    else
        temp /= 10;

Aside: this also implies that the temperature range is only -99.9 through 99.9. What a weird encoding. (Two bytes holding a signed 16-bit integer could easily store a much larger range, from -32,768 through 32,767, and would need less magic to decode.)
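To illustrate that aside, here is a minimal sketch of what decoding could look like if the two bytes held a plain signed 16-bit value instead. This is a hypothetical encoding, not what the device sends: I am assuming big-endian byte order, two's complement, and a value in tenths of a degree, and the function name is my own.

```c
#include <stdint.h>

/* Hypothetical alternative (NOT the device's actual format): the
 * temperature in tenths of a degree, sent as a big-endian signed
 * 16-bit two's-complement integer. */
int32_t decode_tenths_int16(uint8_t hi, uint8_t lo)
{
    /* Combine the two bytes into an unsigned value, 0 .. 65535. */
    int32_t raw = ((int32_t)hi << 8) | lo;

    /* Fold the upper half into the negative range, -32768 .. -1. */
    return raw >= 0x8000 ? raw - 0x10000 : raw;
}
```

With tenths as the unit, that would cover -3276.8 through 3276.7 degrees; for example, the bytes 0xFF 0x16 would decode to -234, so -23.4.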

Now, the decimal value 80 translates to the binary bit pattern %01010000 (whereas the hexadecimal 0x80 is binary %10000000). In the manual, it says:

Temperature:

- 1. character indicates negative or positive temperature (0 – positive, 1 – negative)

- 2. and 3. character is integer part of temperature

- 4. character is fractional part of temperature

So, it seems only the rightmost of those 4 leftmost bits matters. (Binary %00010000, or decimal 16, or hexadecimal 0x10.) Indeed, a sign “character” of 1 sets one of the two bits in the decimal value 80, so the test succeeds without caring about the other bit.

Still: it smells that the bit pattern has 2 ones! (And that a decimal constant is used, while hexadecimal constants are used for all other bitwise operations in that example.) So don’t just test with negative temperatures; a bug like this would especially affect positive values that erroneously happen to match that bit pattern.
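To make that concrete, here is a small sketch comparing the test as written with a test on just the one bit the manual describes. The function names are mine, and a first byte such as 0x42 is a made-up value; the manual does not say the device ever sets that extra bit.

```c
#include <stdbool.h>
#include <stdint.h>

/* The test as written in the example: decimal 80 is 0x50,
 * so it checks bit 6 (0x40) and bit 4 (0x10) at once. */
bool is_negative_as_written(uint8_t first_byte)
{
    return (first_byte & 80) != 0;
}

/* Only bit 4, matching the manual's sign character 1. */
bool is_negative_strict(uint8_t first_byte)
{
    return (first_byte & 0x10) != 0;
}
```

For 0x12 (sign character 1) both return true, and for 0x02 (sign character 0) both return false. For the hypothetical 0x42, the test as written reports a negative temperature while the strict one does not, which is exactly the kind of positive value worth including in a test.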

In short: I guess it will work as expected, though I would have used the stricter and less confusing bit pattern 0x10 (which also reserves the other 3 bits for future use).
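For what it's worth, a sketch of how I would write it with the 0x10 mask. It takes the two temperature bytes (bytes[8] and bytes[9] in the example) as arguments and returns tenths of a degree as an integer to avoid floating point; the function name and that return convention are my own choices, not something from the manual.

```c
#include <stdint.h>

/* Decode the 3-digit packed BCD temperature, in tenths of a degree.
 * b8 and b9 correspond to bytes[8] and bytes[9] in the example.
 * Assumes each nibble holds a valid decimal digit (0 .. 9). */
int decode_bcd_tenths(uint8_t b8, uint8_t b9)
{
    int tenths = (b8 & 0x0F) * 100   /* tens of degrees   */
               + (b9 >> 4) * 10      /* units of degrees  */
               + (b9 & 0x0F);        /* tenths of degrees */

    /* Sign: only bit 4 of the first byte, as the manual describes. */
    return (b8 & 0x10) ? -tenths : tenths;
}
```

So the bytes 0x12 0x34 give -234 (that is, -23.4) and 0x02 0x34 give 234. Dividing by 10.0 at the point of display gets back the decimal value.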