Will adaptive data rate (ADR) help the end device determine the best (highest usable) data rate when the gateway is some distance away? That is, if certain data rates are not sufficient for a given range of communication, will the end device and gateway be able to work this out? Thanks for the help.

Yes, that is what it is intended to do.

It’s worth noting, however, that the logic lives in the network server, not the gateway (gateways have very little internal logic). The network server considers the signal reports from every gateway that received each packet and tells the node to adjust its settings for those conditions: in effect, at least on average, for the propagation path to the best gateway.
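As a rough illustration of the kind of calculation a network server might do, here is a minimal sketch in the style of the algorithm Semtech describes in its “Understanding ADR” note: take the best SNR over recent uplinks, subtract the demodulation floor for the current spreading factor and an installation margin, and spend each 3 dB of surplus on one step of faster data rate (then lower TX power). The SNR floors are the commonly quoted LoRa values at 125 kHz; the 10 dB margin, the 2 to 14 dBm power range and the 3 dB power step are assumptions, and real network servers may differ in the details.

```python
import math

# Minimum SNR (dB) a LoRa receiver typically needs to demodulate each SF at 125 kHz.
REQUIRED_SNR_DB = {7: -7.5, 8: -10.0, 9: -12.5, 10: -15.0, 11: -17.5, 12: -20.0}
STEP_DB = 3.0  # assumed: one ADR "step" per 3 dB of surplus margin


def adr_steps(recent_snr_db, sf, installation_margin_db=10.0):
    """How many steps the link can afford, based on the best SNR seen recently."""
    surplus = max(recent_snr_db) - REQUIRED_SNR_DB[sf] - installation_margin_db
    return math.floor(surplus / STEP_DB)


def apply_steps(sf, tx_power_dbm, n_step, min_sf=7, min_power_dbm=2, max_power_dbm=14):
    """Spend positive steps on a faster data rate first, then on lower TX power.
    Negative steps only raise TX power; in this sketch the server never lowers the
    data rate, which is left to the device's own ADR_ACK back-off."""
    while n_step > 0 and sf > min_sf:
        sf -= 1
        n_step -= 1
    while n_step > 0 and tx_power_dbm - STEP_DB >= min_power_dbm:
        tx_power_dbm -= STEP_DB
        n_step -= 1
    while n_step < 0 and tx_power_dbm + STEP_DB <= max_power_dbm:
        tx_power_dbm += STEP_DB
        n_step += 1
    return sf, tx_power_dbm
```

For example, a node at SF12 whose best recent SNR is 5 dB has 15 dB of surplus, i.e. five steps, which is exactly enough to move it to SF7 at full power.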

That’s in part why it’s problematic if a gateway suddenly vanishes, or receives on fewer than all of the channels: its strong signal reports may lead nodes that were close to it to be told to use short-range settings, which leave them unable to reach more distant gateways that may still be in operation and able to receive on all the channels that a proper gateway should.

Nodes also have some of their own fallback logic where they start moving towards longer range settings if they don’t receive any feedback from the network over an extended period of time, on the order of hours to days.

How does a gateway signal to a node that its packets are not reaching that gateway in the first place? I mean, if the node is using a higher data rate than it should and is thus not able to reach the gateway, then obviously the gateway cannot send a downlink message to the node to adjust its data rate since it doesn’t know that node even exists. Do nodes using ADR start with a high data rate and then send recurring packets with lower and lower data rates?

Again, gateways really don’t do anything. The network server acts through gateways.

It cannot, of course.

So nodes start in the longest-range mode (high spreading factor, i.e. low data rate, and high RF power) and may then be commanded to switch to a shorter-range mode if the received signal indicators suggest that would be workable.

Starting fast and stepping down would be a less effective strategy. However, something like it does happen when a short-range mode that was previously working stops working: perhaps the nearest gateway has gone down, or perhaps the node has moved. After a substantial period without any response, the node will start ramping to longer-range modes.

Of course it’s possible that the network is dead entirely (server problems) or corrupted (session keys lost by the server), in which case the longer-range mode isn’t going to work either. But at least the node tried…

You may see this for OTAA. For example, in EU868, LMIC first tries the default channels at some initial data rate that is configured in the device. This may well be the fastest data rate available (SF7BW125). When all default channels fail, LMIC decreases the data rate by one step and tries again.
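A minimal sketch of the step-down join order described above, assuming the three EU868 default join channels (868.1, 868.3 and 868.5 MHz) and the EU868 mapping of DR5 to DR0 onto SF7 to SF12. Real LMIC also applies duty-cycle limits and random delays, which are left out here, and this sketch simply stops after DR0 rather than modelling whatever LMIC does next.

```python
EU868_DEFAULT_CHANNELS_MHZ = [868.1, 868.3, 868.5]
EU868_DR_TO_SF = {5: 7, 4: 8, 3: 9, 2: 10, 1: 11, 0: 12}  # 125 kHz data rates


def join_attempts(initial_dr, min_dr=0):
    """Yield (dr, sf, channel_mhz) in step-down order: every default channel at the
    current DR, then one DR lower, stopping after min_dr (a simplification)."""
    dr = initial_dr
    while dr >= min_dr:
        for channel in EU868_DEFAULT_CHANNELS_MHZ:
            yield dr, EU868_DR_TO_SF[dr], channel
        dr -= 1
```

`list(join_attempts(5))` starts with SF7 on 868.1, 868.3 and 868.5 MHz, then SF8 on the same three channels, and ends with SF12 after 18 attempts.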

This is fine for an OTAA Join, as for such the node knows it should always receive a downlink for each uplink, so it knows when things failed. But for ADR, it may take as many as 96 uplinks to detect that no downlink was received. According to the 1.0.2 specifications:

4.3.1.1 Adaptive data rate control in frame header (ADR, ADRACKReq in FCtrl)

[…]

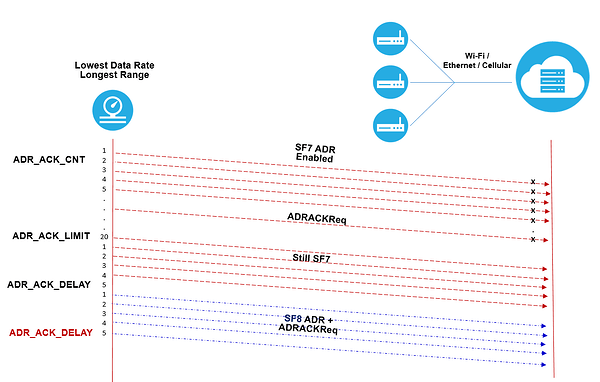

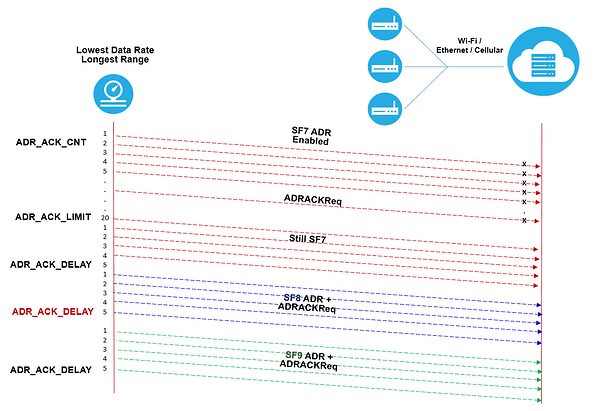

If an end-device whose data rate is optimized by the network to use a data rate higher than its lowest available data rate, it periodically needs to validate that the network still receives the uplink frames. Each time the uplink frame counter is incremented (for each new uplink, repeated transmissions do not increase the counter), the device increments an ADR_ACK_CNT counter. After ADR_ACK_LIMIT uplinks (ADR_ACK_CNT >= ADR_ACK_LIMIT) without any downlink response, it sets the ADR acknowledgment request bit (ADRACKReq). The network is required to respond with a downlink frame within the next ADR_ACK_DELAY frames, any received downlink frame following an uplink frame resets the ADR_ACK_CNT counter.

In LoRaWAN 1.0.x, for most (if not all) regions, ADR_ACK_LIMIT is 64 and ADR_ACK_DELAY is 32. (In LoRaWAN 1.1, these values are configurable by the network via the ADRParamSetupReq MAC command.)
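The arithmetic behind those numbers, as a small sketch: the k-th one-step data-rate decrease comes after ADR_ACK_LIMIT + k * ADR_ACK_DELAY uplinks without any downlink. The 10-minute send interval in the usage note is just an example.

```python
ADR_ACK_LIMIT = 64  # LoRaWAN 1.0.x value for most regions
ADR_ACK_DELAY = 32


def uplinks_before_step(k, limit=ADR_ACK_LIMIT, delay=ADR_ACK_DELAY):
    """Consecutive uplinks without any downlink after which the device makes
    its k-th one-step data-rate decrease (k >= 1)."""
    if k < 1:
        raise ValueError("k must be >= 1")
    return limit + k * delay


def hours_without_downlink(uplinks, interval_minutes):
    """Wall-clock time those uplinks take at a fixed send interval."""
    return uplinks * interval_minutes / 60
```

So an EU868 device stuck at DR5 (SF7) needs five steps, i.e. 224 unanswered uplinks, to reach DR0; at one uplink every 10 minutes that is roughly 37 hours, which fits the “hours to days” mentioned earlier in the thread.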

When a device detects that it has missed an expected downlink, then at worst 96 of its uplinks have already gone unreceived, or at best it has simply missed the ADR-triggered downlink. It may want an aggressive strategy to make sure it is actually still in range, or to change its settings to get back in range. But according to the same specification, it seems that a certified device should only decrease its data rate by one step and then wait another 32 uplinks:

If no reply is received within the next ADR_ACK_DELAY uplinks (i.e., after a total of ADR_ACK_LIMIT + ADR_ACK_DELAY), the end-device may try to regain connectivity by switching to the next lower data rate that provides a longer radio range. The end-device will further lower its data rate step by step every time ADR_ACK_DELAY is reached. The ADRACKReq shall not be set if the device uses its lowest available data rate because in that case no action can be taken to improve the link range.
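The device-side behaviour quoted above can be sketched as a small state machine. This is a simplified reading of the 1.0.2 text, assuming the counter is not reset on a data-rate decrease and ignoring TX power and channel-mask handling; the class and method names are hypothetical.

```python
class AdrBackoff:
    """Simplified device-side ADR_ACK_CNT handling per LoRaWAN 1.0.2 section 4.3.1.1."""

    def __init__(self, dr, min_dr=0, limit=64, delay=32):
        self.dr = dr
        self.min_dr = min_dr
        self.limit = limit  # ADR_ACK_LIMIT
        self.delay = delay  # ADR_ACK_DELAY
        self.ack_cnt = 0  # ADR_ACK_CNT: new uplinks sent since the last downlink

    def next_uplink(self):
        """Call once per new uplink (new frame counter). Returns (dr, adr_ack_req)."""
        past = self.ack_cnt
        # At LIMIT + DELAY unanswered uplinks, and every DELAY after that: one DR step down.
        if (past >= self.limit + self.delay
                and (past - self.limit) % self.delay == 0
                and self.dr > self.min_dr):
            self.dr -= 1
        # ADRACKReq is set once LIMIT is reached, but never at the lowest data rate.
        adr_ack_req = past >= self.limit and self.dr > self.min_dr
        self.ack_cnt += 1
        return self.dr, adr_ack_req

    def on_downlink(self):
        """Any downlink received after an uplink resets the counter."""
        self.ack_cnt = 0
```

Starting from DR5 with no downlinks at all, uplink 65 is the first with ADRACKReq set, uplink 97 goes out at DR4, and uplink 225 reaches DR0, after which the bit is no longer set.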

Aside: the above is also why ADR-enabled devices that have trouble receiving downlinks will eventually end up at DR0.

That is not necessarily true! Many devices start at SF7 (short range), especially for initial OTAA join requests, when they start requesting network access and configuration. After a few attempts with no success, the node falls back to SF8 and tries the cycle again; if that fails, SF10, and so on until it is making SF12 attempts. There is much debate about which policy (start at SF7 and back off, or start at SF11 or SF12 and ramp up, probably under ADR control) leads to the lowest early-life battery consumption. It will depend on the deployment scenario and on how quickly a given network starts to adjust node settings under ADR control. IIRC, TTN waits for around 20 messages before adjusting under ADR. There are also some guidelines and network policies that ‘ban’ (or at least discourage) joins at e.g. SF12, and of course the RX2 backup window on TTN is set at SF9.

As for the data rate for OTAA, Semtech writes in Joining and Rejoining:

In addition to random frequency selection, randomly selecting available DataRates (DRs) for the region that you want is also important.

Note: It is important to vary the DR. If you always choose a low DR, join requests will take much more time on air. Join requests will also have a much higher chance of interfering with other join attempts as well as with regular message traffic from other devices. Conversely, if you always use a high DR and the device trying to join the network is far away from the LoRaWAN gateway or sitting in an RF-obstructed or null region, the gateway may not receive a device’s join request. Given these realities, randomly vary the DR and frequency to defend against low signals while balancing against on-air time for join requests.
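Semtech’s advice amounts to picking each join attempt’s DR and channel at random. A minimal sketch, assuming the EU868 default join channels and DR0 to DR5 at 125 kHz; the optional weights (for example, to favour faster data rates and save air time) are an assumption, not part of Semtech’s text.

```python
import random

EU868_JOIN_CHANNELS_MHZ = [868.1, 868.3, 868.5]
EU868_JOIN_DRS = [0, 1, 2, 3, 4, 5]  # DR0 (SF12) .. DR5 (SF7), 125 kHz


def pick_join_params(rng=random, dr_weights=None):
    """Return a random (data_rate, channel_mhz) pair for the next join request.
    dr_weights=None means every DR is equally likely."""
    dr = rng.choices(EU868_JOIN_DRS, weights=dr_weights, k=1)[0]
    channel = rng.choice(EU868_JOIN_CHANNELS_MHZ)
    return dr, channel
```

Passing a seeded `random.Random` instance as `rng` makes the sequence reproducible for testing.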

And as for ADR acknowledgements:

The same strategy is indeed explained in Semtech’s Understanding ADR:

If no answer is received from the network server by the time the acknowledgement delay period has expired, the data rate will automatically be reduced by one step, as illustrated in Figure 8.

Figure 8

After this one-step data rate reduction, the end device continues sending messages to the server (at this new, lower, data rate) requesting an ADR acknowledgement. Once an acknowledgement is received, the end device uses the new, server-determined, data rate until it receives an instruction to change it again via the normal ADR mechanism. However, if no answer is forthcoming, the end device continues to ratchet down the data rate, step by step, until it gets a response or, assuming the device and network are in Europe, until it reaches SF12 (Figure 9).

Note: The highest spreading factor that can be used with LoRaWAN varies by region, for instance in the USA, the highest SF is SF10.

Figure 9
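To make the regional difference concrete, here are the LoRa uplink data-rate tables as commonly listed in the LoRaWAN Regional Parameters for EU868 and US915 (FSK and LR-FHSS rates omitted). Treat them as a sketch to check against the current Regional Parameters document.

```python
# Uplink DR index -> (spreading factor, bandwidth in kHz)
EU868_UPLINK = {0: (12, 125), 1: (11, 125), 2: (10, 125), 3: (9, 125),
                4: (8, 125), 5: (7, 125), 6: (7, 250)}
US915_UPLINK = {0: (10, 125), 1: (9, 125), 2: (8, 125), 3: (7, 125),
                4: (8, 500)}


def longest_range_sf(table):
    """SF of the lowest data rate, i.e. where the ADR back-off ends up."""
    return table[min(table)][0]
```

This is also why the ADR back-off bottoms out at SF10 rather than SF12 on US915.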

Just like in the LoRaWAN specifications: not a word about the transmission power.

Some join sequences seem to hit the same SF several times before moving on. A quick run from SF7 all the way to SF12 would seem more efficient, possibly trying a couple of times at SF7, SF8 and SF9, travelling hopefully, before using up air time and battery banging away at SF12 in vain.

Then perhaps back off for many multiples of the configured send interval, rather than fruitlessly trying again just a few moments later.
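The schedule suggested above, sketched under clearly hypothetical parameters: two tries each at SF7, SF8 and SF9, a single try each at SF10 to SF12, then a wait of ten send intervals before the next round. Duty-cycle limits and random jitter, which a real device would have to respect, are ignored.

```python
def join_round(fast_sfs=(7, 8, 9), slow_sfs=(10, 11, 12), tries_fast=2):
    """Spreading factors to try, in order, during one join round (hypothetical policy)."""
    order = []
    for sf in fast_sfs:
        order.extend([sf] * tries_fast)
    order.extend(slow_sfs)
    return order


def wait_after_failed_round_s(send_interval_s, multiple=10):
    """Back off for many send intervals instead of retrying a few moments later."""
    return send_interval_s * multiple
```

With a 10-minute send interval, a failed round of nine attempts would be followed by a 100-minute pause.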

I don’t understand the logic of ADR:

If I keep sending unconfirmed messages, that means I don’t need any downlink acknowledgment.

So even if I don’t receive the downlink after 64+32 uplinks, it doesn’t mean that I need ADR to make any adjustments. Isn’t that against my original intention?

The point of ADR is to tune a device’s transmission to its environment by changing the data rate.

If it’s being received with great signal strength, then the data rate can be raised (a lower spreading factor) and/or the transmit power reduced, using less power for the device and less air time for the community as a whole.
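To put numbers on the air-time saving, here is the LoRa time-on-air formula from Semtech’s SX127x datasheet, with LoRaWAN’s uplink defaults (8-symbol preamble, explicit header, CRC on, coding rate 4/5, low-data-rate optimisation for SF11 and SF12 at 125 kHz). The 13-byte payload in the usage note is just an example size.

```python
import math


def lora_airtime_ms(payload_bytes, sf, bw_hz=125_000, preamble_symbols=8,
                    coding_rate=1, explicit_header=True, crc=True):
    """Time on air of one LoRa frame; coding_rate=1 means 4/5."""
    t_sym_ms = (2 ** sf) / bw_hz * 1000
    # Low data rate optimisation is used when a symbol lasts longer than 16 ms
    # (SF11 and SF12 at 125 kHz).
    de = 1 if t_sym_ms > 16 else 0
    ih = 0 if explicit_header else 1
    num = 8 * payload_bytes - 4 * sf + 28 + 16 * int(crc) - 20 * ih
    den = 4 * (sf - 2 * de)
    payload_symbols = 8 + max(math.ceil(num / den) * (coding_rate + 4), 0)
    preamble_ms = (preamble_symbols + 4.25) * t_sym_ms
    return preamble_ms + payload_symbols * t_sym_ms
```

For a 13-byte PHY payload this gives about 46 ms at SF7 and about 1155 ms at SF12, so the same message costs roughly 25 times more air time (and transmit energy at equal power) at SF12.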

It’s the device transmission that’s key here, not downlinks.

It’s unrelated to unconfirmed messages - which are the best for the community as well.

My understanding of ADR is that it needs the response of the network server, which is what I call the downlink.

I learned before that unconfirmed messages never receive a downlink.

Combining the two, I feel that ADR conflicts with unconfirmed messages, because ADR will never receive the response from the network server.

You are conflating two things that are separate.

You can ask for uplinks to be confirmed or not (unconfirmed is preferable). On receipt of a confirmed uplink, the network server will send back, via a gateway, a ‘got it’ acknowledgement.

If you have ADR turned on, at some point (20 or 30 messages in) the network server will evaluate your signal strength and, if need be, queue an ADR MAC request (LinkADRReq) as a downlink. A Class A device will pick this up in a receive window after its next uplink.

I’m not sure why you say that a device will not receive a downlink. It’s independent of uplink confirmations.

I got it.

The downlink of ADR has nothing to do with the uplink confirmation.

Thanks a lot!

They’re independent, but I believe that in an ideal case they could share the same downlink packet.

Though that’s kind of irrelevant, as you really, really shouldn’t be using confirmed uplinks.
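One way to picture the independence (and the shared packet): a LoRaWAN downlink has an ACK bit in FCtrl, which only answers a confirmed uplink, and an FOpts field that can carry MAC commands such as LinkADRReq. A minimal network-server-side sketch; the class and function names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Downlink:
    ack: bool = False  # FCtrl ACK bit: only meaningful after a confirmed uplink
    mac_commands: List[str] = field(default_factory=list)  # carried in FOpts


def build_downlink(uplink_confirmed: bool, pending_mac: List[str]) -> Optional[Downlink]:
    """Return the downlink to schedule after this uplink, or None if there is nothing to send."""
    if not uplink_confirmed and not pending_mac:
        return None  # no ACK owed and no MAC command queued: no downlink at all
    return Downlink(ack=uplink_confirmed, mac_commands=list(pending_mac))
```

An unconfirmed uplink with a pending LinkADRReq still gets a downlink, just without the ACK bit set.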